As I see it, there has been a huge revolution in economics over the past 35 years or so.

But it wasn't a change in the dominant econ theory. Theory has certainly changed, sure, but more by evolution than revolution - things like game theory and behavioral theory have slowly been mixed in with the old hyper-rational "neoclassical" paradigm.

No, the big revolution has been in the role of theory itself. Go back and read some old 1970s papers (I'm not going to link to any specific ones, following my policy of not singling out specific authors), and you'll be amazed at the disconnect from reality. A lot of those papers would take great pains to specify that the outcome space was assumed to be a Borel space, while spending precisely zero time discussing how the model could be tested against real-world data. This was econ's famed "deductive method" at its most arrogant. It really was enough to just show up, state your assumptions (i.e. herp your derp), solve the resultant math problem to get some nice-looking equations, and call it a day.

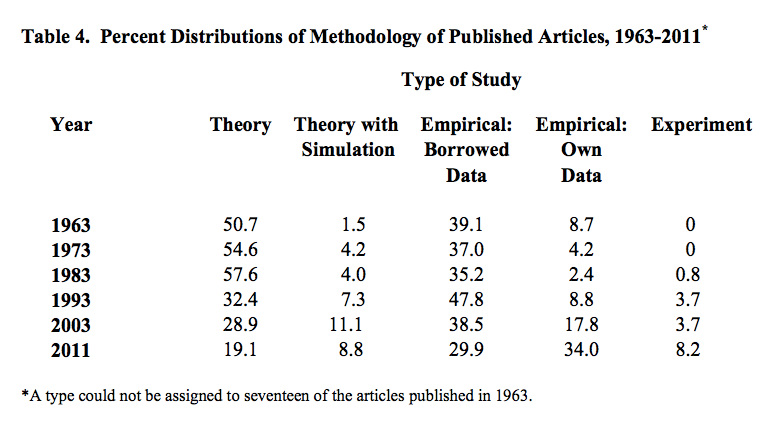

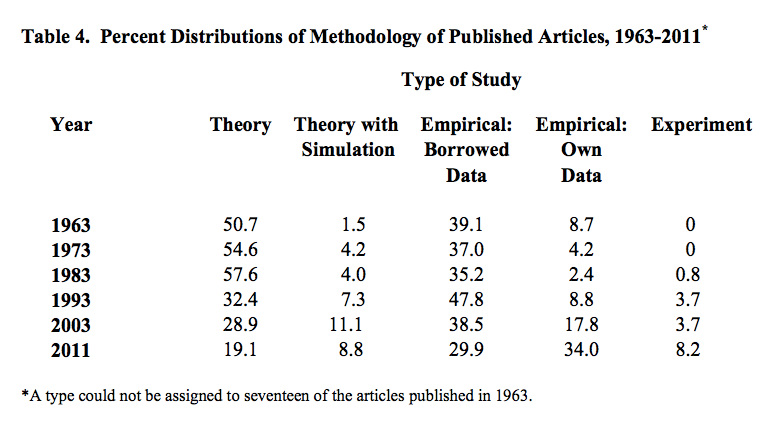

That doesn't seem to be so true anymore. Theory papers peaked as a percent of the total sometime between 1973 and 1993, and have been declining ever since:

That's a very dramatic drop. And notice that a lot more theory papers nowadays include simulation.

A lot of people can feel the wind changing. "Theory is kind of dead," Tyler Cowen once told me over drinks (beer for me, soft drink for him). "Nothing big is coming out of theory these days." "What about social learning and herd behavior?", I countered. "That was sort of the last gasp of theory," he said with a grin, "and it was twenty years ago."

Macro has been slower to embrace the change, because macro data is so uninformative that often all you can do is spin one theory after another. But macro also seems to feel the pressure. "We don't really need more macro theorists," a prominent macroeconomist once told me. "What we need are macro empiricists."

I call that a revolution. It's not quite a Thomas Kuhn style paradigm shift - more of a shift in the underlying criteria that the economics community is using for deciding how the world works. Deduction is on the way out, and induction is on the way in.

Why did this happen? I'm not sure, but my guess is that the meteor that hit the economics dinosaurs, and set the field's evolution off in a new direction, was named Daniel Kahneman. Starting in 1973, Kahneman released a torrent of papers showing that human behavior didn't look anything like the way that homo economicus was supposed to act. Other behavioral meteors followed. There was Richard Thaler, who showed how behavioral effects could mess up many of the decision-making processes in economics. There was Vernon Smith, who showed that even simple markets don't work the way economists long assumed. There was Colin Camerer, who scanned people's brains and confirmed that non-rational decision-making processes were at work. There were many others. Really, it was a giant comet of Behavioral Economics that broke up somewhere in orbit and fell to Earth in many parts.

As the weight of evidence mounted, the economics community could no longer ignore the contradictions between theory and reality. But though they had found a swarm of factual anomalies, the behaviorists failed to produce a new grand theory to replace the hyper-rational paradigm their empirical work had smashed. Even simple, limited theories like Prospect Theory have struggled to make sense of the world outside the lab. The ship of economics hasn't so much been pointed in a new direction as set adrift without a compass.

Of course, this was probably inevitable. The old hyper-rational theory was unified only because it dramatically oversimplified the world. But it could only oversimplify the world because it refused to look at the world. As soon as someone came along and forced economists to look out at the world, most of the work that economists had done had to be discarded. (Not all, interestingly; the pure-rational-deductive approach has had a few stunning successes.)

So what do you do when you can't make sense of the world? You just keep trying to get a better picture of the world. My guess is that this is why econ is shifting from theory to empirics.

But I also see econ's shift as being part of a bigger, deeper change that is happening all across academia. In physics, fundamental theory has hit a wall. The Standard Model, completed in the 70s, has been well confirmed by experiments, and all the leading candidates for new physics seem to be untestable. Even in math, formal deductive proof is slowly being replaced by proof-by-computer; at some point the question of whether math is an empirical science gets harder to answer definitively. As for the humanities, "theory" has basically become a giant joke, even if a lot of leading scholars haven't gotten the joke yet. Meanwhile, a lot of the big revolutionary advances seem to be coming in biology, while the other "lab sciences" of chemistry, materials science, neuroscience and electrical engineering continue to rack up steady progress. And "data science" is considered the new frontier. Obviously all these fields make use of existing theories, but they rarely tend to cook up dramatically new theories, or to work in "deductive", theory-first paradigms.

Maybe humanity is reaching the end of a big Theory Wave. The late 19th and early 20th centuries saw such enormous victories for theory - Cities destroyed! Thinking machines invented! Logistics revolutionized! Chemistry reinvented! - that we poured a lot of our brainpower into sitting down with pencil and paper and working out mathematical ideas about how the world might work. Maybe we've temporarily exhausted the surplus gains available from ramping up our investment in theory, and the boom is quietly coming to an end.

But that's just my theory. To back it up, I'd need some better data.

Update: A number of people have suggested that the supposed decline of economics theory is really just an explosion of empirical work due to the decline in the cost of computing and the increase in the supply of publicly available data. I certainly do believe that a decline in the cost of computing has led to an explosion of empirical work. However, here are some points that suggest that the "theory is dying" hypothesis has some legs:

1. In the past, increases in the supply of data (from new experimental techniques and from earlier improvements in computing power) have sparked simultaneous booms in theory, as theory was needed to explain the new data gathered. I don't think we see that happening in econ.

2. Currently, experiments are rising as a share of published papers. The cost of experiments is mostly subject fees, not the cost of computing.

3. According to a similar study by Card and DellaVigna, the absolute number of papers published in top journals has fallen. This means that the total number of theory papers, not just the percentage, published in top journals has fallen. If an explosion in empirics due to cheap computing had simply outpaced a more gentle increase in theory papers, we would expect to see the top journals increase their number of papers, or for new top journals to emerge. Instead, neither has happened. In fact, the failure of the computing boom to lead to more top-journal papers is a puzzle in and of itself.

4. As seen in the Hamermesh study, the percent of top-journal publications doing empirical analysis on borrowed data has also decreased recently, despite the increase in the supply of such data.

5. According to Card and DellaVigna, the frequency of citations for "theory" papers (which means pure micro theory, and so doesn't include all theory) is falling relative to other fields.

Now, it may be the case that top-journal publications are simply a bad guide to how much is getting discovered. Much more good research may now be getting published in lower-tier journals. Or perhaps the fraction of truly important research in even top journals was always small, so trends in total top-journal publication might not reflect trends in truly important results.

In that case, all we have to go on are anecdotes and the zeitgeist within the profession.

Update 2: Paul Krugman's anecdotal evidence agrees with mine - theory is becoming less important in trade and macro. He has different hypotheses about the cause, however.

Update 3: Tony Yates (whose blog I just now discovered, thanks of course to Mark Thoma) disagrees, and thinks that macroeconomic theory is alive and well. I think he does have a good point; macro seems to have resisted the shift to empiricism more than the other econ sub-fields. Of course, Yates and I would probably disagree on whether or not that is a good thing.

"Even in math, formal deductive proof is slowly being replaced by proof-by-computer; at some point the question of whether math is an empirical science gets harder to answer definitively."

This is definitely not true. Sure, some combinatorics problems are being solved with proof-by-computer. It's also true that many mathematicians use computers to run difficult computations to assist them in coming up with hypotheses and ideas for proofs.

But for the vast majority of mathematicians, proving things the hard way -- without computers -- is the only way it works. In most fields (combinatorics is the main exception, and even there "computer assisted proofs" are kind of a niche field), the conceptual landscape is way too hard to navigate for a computer. At least for now ;)

I agree. This stuck out to me as completely inaccurate. Even combinatorialists primarily use computer programs to generate data that can be used to develop and prove standard, deductive theorems.

As far as 'math as empirical science' goes, I'd say this is already a standard mentality in many mathematical areas. If the model simulates the data, does checking for uniqueness and existence of the solution to your differential equation really matter? Theory follows application in a surprisingly large amount of mathematical research.

Testing that you haven't accidentally generated a contradictory model (which will prove anything) is actually very important. That's one of the reasons you have to check for existence of the solution.

Uniqueness? Well, think: does it matter whether this is a unique solution or not, in your application?

I am completely puzzled by the attempt to contrast between "deductive proofs" and "proofs by computer". Computer assisted proofs are deductive. And arguably more rigorous than human written proofs. Using computers to generate data in support of conjectures or to draw conjectures or to discover structure is an entirely different matter from computer assisted proofs. Mathematicians have made tables of calculations to test their hypotheses for ages, but no one ever called those tables "proof" and no one does now.

Wouldn't better computer technology be a more straightforward explanation? I'm too young to have experienced it, but I guess that when running a regression involved submitting a bunch of punch cards with Fortran code to an operator rather than typing reg y x into Stata, the relative cost of empirical work was simply higher than it is today.

That is important of course. But the proportion of empirical econ papers written with "borrowed" data is declining too. More people are creating their own data sets, which of course requires computer technology but also entails lots of other costs. Also, experiments, which are very very costly, are rising as a proportion of the total. So I think that this is much bigger than just the cost reduction from computers.

"Starting in 1973, Kahneman released a torrent of papers showing that human behavior didn't look anything like the way that homo economicus was supposed to act. Other behavioral meteors followed. There was Richard Thaler, who showed how behavioral effects could mess up many of the decision-making processes in economics."

Although I don't deny the scope of Kahneman and Tversky's scholarly contributions, I must say...do you have no word for Allais (1953) and Ellsberg (1961)?

Herb Simon?

Here is a nice example of a new theory which explains the real world (though I admit there's more to be done).

http://www.philipji.com/item/2013-07-03/two-measures-of-money-supply-1981-to-may-2013

It is nice to know that the computer understands the problem. But I would like to understand it too. - Eugene Wigner

>> According to a similar study by Card and Della Vigna, the absolute number of papers published in top journals has fallen. this means that the total number of theory papers, not just the percentage, published in top journals has fallen.

Or... the "top journals" make up a less-important means of publication than they used to, so papers are migrating to other channels.

"Or... the "top journals" make up a less-important means of publication than they used to, so papers are migrating to other channels."

This is going on. The good theories -- we're talking models for specific areas, not the Grand Unified Bullshit which was so popular for decades -- are often still blacklisted from the "top journals", but used in other journals.

The length of a typical paper has significantly increased. Maybe there are now more ideas per paper?

Posted at 4:31am? Crazy New York econlife I guess.

How would you or Hamermesh count structural modeling in trade or IO? Generally a structural paper will:

1. Find some puzzling correlations in the data (In jargon, stylized facts. Never try to present an unstylized fact, it makes you look like a slob.)

2. Write down a standard model, but add some sort of gimmick which both keeps everything tractable, and will generate the data relationship.

3. Use some sort of moment fitting to get parameter values for the model.

4. Run counterfactuals.
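
Step 3 above can be made concrete with a minimal sketch of moment fitting. Everything in it is hypothetical and for illustration only: the one-parameter toy model (implied mean `theta`, implied variance `theta ** 2`), the function names, and the made-up target moments are assumptions, not anything taken from an actual trade or IO paper.

```python
def model_moments(theta):
    """Hypothetical toy model: implied (mean, variance) for parameter theta."""
    return (theta, theta ** 2)


def moment_distance(theta, data_moments):
    """Sum of squared gaps between model-implied and data moments."""
    return sum((m - d) ** 2 for m, d in zip(model_moments(theta), data_moments))


def fit_by_grid(data_moments, lo=0.0, hi=5.0, steps=5001):
    """Return the theta on an evenly spaced grid that minimizes the distance."""
    grid = [lo + (hi - lo) * i / (steps - 1) for i in range(steps)]
    return min(grid, key=lambda t: moment_distance(t, data_moments))


# Made-up target moments (mean 2, variance 4) that the toy model can match.
theta_hat = fit_by_grid((2.0, 4.0))
```

Step 4 would then amount to calling `model_moments` at some other parameter value and comparing the implied moments with the fitted ones. A real structural paper would use a far richer model and a proper optimizer; the grid search is only there to keep the sketch short.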

The hardest part is the theory, because the standard models break if you start tweaking them. If you call structural modeling empirical, then I don't think theory is dead -- it is just hiding.

A second point is that you keep complaining about the assumptions of 1970's models -- people don't act like homo economicus. While that is true, I find the Akerlof market for lemons a pretty convincing explanation of why driving a car off of a lot causes the value to instantly drop. Whether a model is good or bad depends on the accuracy of its predictions. I agree with your critique of epistemologic game theory.

FYI: Blogger allows for scheduled posts. Also, 4:30 is 'alarm time' for some of us.

Any profession that can convince itself of something demonstrably idiotic--for instance, that Rational Expectations is in any way a representation of reality--is doomed for that generation and the one following.

It's good to see that the damage may be fading, though I'm less sanguine about calling "simulations" an improvement than you appear to be.

OK, so what way of modelling expectations do you prefer?

Me jumping in here randomly...why not stochastic expectations? At any time, people's expectations differ from the model's implication, but the difference is determined by some stochastic process. That's different than RE, but expectations could still be unbiased in expectation.

What way of modelling expectations?

Either there is a better way to model expectations, or not. If there is, then use it. If not, then, in the face of the evidence, admit that one has no realistic model and stop turning the crank on a broken mathematical machine. This would have been (and still is) elementary intellectual hygiene.

BTW, for another "profession that [convinced] itself of something demonstrably idiotic", consider behavior_ist_ psychology, which denied the usefulness of the concept of "mind" or "mental model" or "cognitive process".

This idiotic yet academically dominant brand of psychology hindered progress toward behavioral economics. Its previous existence is the reason for the seemingly redundant term "cognitive psychology". See https://en.wikipedia.org/wiki/Behaviorism and https://en.wikipedia.org/wiki/Cognitive_revolution

Noah,

OK, but if the difference is a white noise, then for practical purposes how different is that from RE?

Anonymous,

lines like "admit that one has no realistic model and stop turning the crank on a broken mathematical machine" show ignorance of the scientific method. ALL models are unrealistic. You need to read Kuhn.

OK, but if the difference is a white noise, then for practical purposes how different is that from RE?

It introduces exogenous belief shocks that can have real effects.

A sentiment model in finance will have what I'm talking about. It will produce excess volatility in asset prices.
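
To illustrate the excess-volatility point, here is a minimal simulation sketch. It assumes the simplest possible setup, which is my own and not a model from the literature: the price equals a fundamental value plus an independent, mean-zero belief shock. The function name, the standard deviations, and the seed are all hypothetical choices.

```python
import random
import statistics


def simulate_prices(n=10_000, sentiment_sd=0.5, seed=0):
    """Simulate fundamentals and prices, where price = fundamental + belief shock."""
    rng = random.Random(seed)
    fundamentals = [rng.gauss(0.0, 1.0) for _ in range(n)]
    # Belief shocks are mean zero, so expectations stay unbiased on average.
    sentiment = [rng.gauss(0.0, sentiment_sd) for _ in range(n)]
    prices = [f + s for f, s in zip(fundamentals, sentiment)]
    return fundamentals, prices


fundamentals, prices = simulate_prices()
# Excess volatility: variance of prices beyond the variance of fundamentals.
excess = statistics.pvariance(prices) - statistics.pvariance(fundamentals)
```

In this toy setup the average price stays close to the average fundamental, yet `excess` comes out positive, because the belief shocks add variance of their own. Whether such shocks have real effects depends on the rest of the model, which the sketch leaves out.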

OK, I'm down wit dat, I like it. But is that not building on some basic premises introduced by the RE revolution? Would we have gotten there without first abandoning models where people keep making systematic errors that can be perpetually exploited by policy-makers?

"...excess volatility in asset prices."

Sorry, but at my school we don't insert any modifiers in front of "prices" that could imply that the price is not always and everywhere correct. Don't you know that any observed volatility in prices is A REFLECTION OF FUNDAMENTALZ!!11!

"OK, but if the difference is a white noise, then for practical purposes how different is that from RE?"

Ever read Hiawatha, mighty statistician?

@Ken Houghton: Rational expectations is an assumption that adds some sort of dynamic consistency to models in a tractable way. Don't criticize the assumptions, criticize the predictions. The failure of the rational expectations literature is that 30 years on, the state of the art DSGE models are no better at forecasting than purely statistical models like VARs.

I am not sure this is true. VARs must come from somewhere. The question is, do atheoretical VARs (if there is really such a thing) do worse than DSGE-VARs? Here is a paper that says they do: http://econpapers.repec.org/article/eeedyncon/v_3a33_3ay_3a2009_3ai_3a4_3ap_3a864-882.htm

I'll bet you know better than I do -- I'm not a macroeconomist. I will say that the result tables 2, 3, 4, and 5 in the paper you sent support my claim that DSGE is no better at forecasting than VAR, and it is questionable if DSGE + VAR is any better than plain old VAR.

veryshuai,

I am not sure what you mean by DSGE vs DSGE + VAR. The columns compare performance of VARs whose restrictions come out of different DSGE models vs. VARs that have no theory backing them up. The paper does not compare these VARs to simulations. Moreover, as columns 5 to 8 show, for relatively short horizons the forecasting errors of the DSGE-based VARs are smaller (the entry is less than one) than those of the unrestricted VAR. So I do not see the failure that you are talking about. In fact, and interestingly enough, the best-performing VAR is the one based on the much-criticized RBC model.

Of course, this is not to say that we have grabbed the Pope by the balls. But I think there is some promise in a research agenda that is still relatively young.

Fair enough, I'll keep an open mind.

I read somewhere that this phenomenon was a manifestation of replacement of (Platonic) Realism, exemplified by the neoclassical programme, with Logical Empiricism, exemplified by the Behaviourists. See D. Arnold and F. Maier-Rigaud. The enduring relevance of the model Platonism critique for economics and public policy. Journal of Institutional Economics, 8:289-294, 2012.

I wonder why it took so long in economics. In other sciences, that particular philosophical change was definitely done by the end of the 19th century.

Over in humanities-land, arguably Theory has also run into a wall. Wonky post-modern babble is stale and passe, much more interesting are things like Moretti's "distant reading" (e.g. stats on what kind of novels were released when).

George Scialabba made the point some years ago that there isn't that much point in writing about the broad issues of international relations these days, because they haven't really changed since George Orwell wrote about them; much more useful are detail-oriented guys like Noam Chomsky who try to get a picture of what's actually going on.

-- Joseph Brenner

I think the pendulum has swung too far away from theory, though. For example, in labor, development, and similar fields much empirical work seems to be devoid of serious economic theory. Modern treatment-effects estimators, regression approaches that simply "control for all other factors", etc. strike me as purely statistical approaches that tease information out of the data. But as Heckman and others have argued much better than I, an anonymous troll, could, it's hard to take policy recommendations seriously without a strong theoretical foundation.

I agree with Ivansml, better computing is a big part of the explanation. Noah's points are good but can be explained because researchers are now competing (more) on the "data" dimension rather than the "theory" dimension and so have to find better ways to source and use data.

An interesting related question is why theory had such a strong run in so many fields at the same time (I agree with Noah's history on that). It seems that intellectual practices for formalization diffused through academia beginning in the late 1800s (not just math, but a lot of related ways of building theory that engaged with disciplinary issues). This was even the origin of "theory" in the humanities, with the early structuralist linguists. (Though that certainly took a bad bounce.)

Alas I've never seen a good cross-disciplinary study of how those practices diffused or even just what they were. I guess they weren't just ways of building formal models, they included rhetorical practices for making non-formal or anti-formal work look passé or stupid.

My guess is the transition was a combination of (1) decreasing net reputational returns to the formalist program (harder to get results, critics getting better at finding weakness, increasingly obvious distance from any useful engagement with reality), (2) rapidly increasing reputational returns from adopting computational methods (including theoretically driven techniques like the Metropolis algorithm) and (3) the kind of strong internal critiques that Noah describes.

Unfortunately strong critiques are almost never enough (as Kuhn says). A good example is Lashley's critique of the behaviorist program -- it was empirically extremely strong, theoretically devastating, well within the existing experimental paradigm, etc. but had essentially no effect, until decades later the disciplinary consensus was weakened by other factors like (1) and (2) above.

CA,

"OK, so what way of modelling expectations do you prefer?"

Even in the limit of unbounded powers of observation it is not *in principle* possible for a rational Bayesian observer to discover the drift rate of a Brownian motion. This means that in a trivial model economy with stochastic income volatility, it is not in principle possible for agents to know the expected variance of income over a finite period of time, which means that posterior distributions of asset returns are hopelessly fat-tailed relative to their God-given-knowledge ratex equivalents. Even when priors are normal!

Rather than assuming that agents have God-given knowledge of the parameters of the model dynamics, isn't it far more sane to assume that they have to use their (infinite!) powers of induction to figure them out? It seems to me that Ken Houghton is pretty accurate in calling "demonstrably idiotic" the claim that "Rational Expectations is in any way a representation of reality". It seems more like a religious belief.

So the answer to your question, in my case, is rational Bayesian agents with unlimited powers of observation. For starters. Then, if necessary, start thinking about the limits of rationality and availability of information.

The idea that rationality implies ratex is a hoax.
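
One way to read the point about the drift of a Brownian motion is as a statement about sampling: observing the path more finely does not sharpen the estimate of the drift, only a longer horizon does. The sketch below is my own illustration under stated assumptions (known volatility `sigma`, equally spaced observations, and the simple estimator that divides the total change by the horizon); it is not the commenter's derivation, and the function name and numbers are hypothetical.

```python
import math


def drift_standard_error(sigma, horizon, n_obs):
    """Standard error of the drift estimate (X_T - X_0) / T from n_obs increments."""
    dt = horizon / n_obs
    # Each increment is N(mu * dt, sigma^2 * dt), so the sum of the
    # n_obs independent increments has variance n_obs * sigma^2 * dt.
    var_of_sum = n_obs * sigma ** 2 * dt
    return math.sqrt(var_of_sum) / horizon


# Same ten-unit horizon, observed coarsely and then very finely.
se_coarse = drift_standard_error(sigma=0.2, horizon=10.0, n_obs=10)
se_fine = drift_standard_error(sigma=0.2, horizon=10.0, n_obs=1_000_000)
```

Both values equal `sigma / sqrt(horizon)` up to floating-point error, so a million observations over a fixed window are no more informative about the drift than ten. Over any finite period the uncertainty about the drift therefore stays strictly positive, however good the agents' powers of observation.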

K,

there is also no guarantee that markets ever move towards or reach equilibrium. We circumvent this problem by assuming that markets are cleared by a Walrasian auctioneer who does not permit any transactions to take place at non-equilibrium values. Clearly, such an auctioneer does not exist. Despite this unrealistic assumption, in many instances the standard supply/demand framework yields useful predictions. In others it does not. The point is that, if you can model expectations in a way that yields more accurate predictions, the profession will listen. RE was an improvement over the crude Keynesian paradigm of naive agents being fooled systematically by policy makers. I sure hope, however, that it is not the final word!

Do you want to model expectations realistically? Go over to the Marketing department and you'll find out how expectations are created.

Noah,

"It's not quite a Thomas Kuhn style paradigm shift - more of a shift in the underlying criteria that the economics community is using for deciding how the world works."

I think that's interesting. In fact, I'd describe the ratex revolution as more akin to a purely Kuhnian (in the original sense before Kuhn changed his mind) scientific revolution, in which we have a huge sociological shift in the research community without any empirical support that it actually constitutes progress. The shocking thing about ratex is that it isn't even a necessary consequence of perfect rationality and perfect powers of observation, yet by 1980 non-ratex papers were basically unpublishable in top journals.

In the absence of good data, of course, this kind of pure theory "science" occurs all the time (witness the Cartesian solar system), and all fields of science have experienced tug-of-wars between theorist puritans and proudly innumerate empiricists. Every once in a while a Newton (or in this case, something that will follow from the works of Lucas, Woodford, Kahneman, Weitzman, Minsky, etc) comes along and knocks some heads together.

"Maybe we've temporarily exhausted the surplus gains available from ramping up our investment in theory, and the boom is quietly coming to an end."

There are quite a few extremely important insights that have yet to be integrated into a coherent framework. While we don't need everyone doing theory, we definitely need some big thinkers to do some very heavy lifting. I had a supervisor who would tell aspiring theoretical physicists, "Great. Every generation needs one or two of those!" But unfortunately we don't know which aspiring young theorist it's going to be, so it's probably not a bad idea to throw a lot of them against the wall and see what's going to stick.

In fact, I'd describe the ratex revolution as more akin to a purely Kuhnian (in the original sense before Kuhn changed his mind) scientific revolution, in which we have a huge sociological shift in the research community without any empirical support that it actually constitutes progress.

Yes. But remember that Kuhn doesn't specify what "progress" means, and says that different communities may have different criteria at different points in time. He sort of assumes that validity is always going to be a criterion, but says that aesthetics will also usually play a role. Well, what if a community decided that aesthetics (or political aesthetics) was the only criterion that mattered?

CA,

"OK, but if the difference is a white noise, then for practical purposes how different is that from RE?"

Not that I'm an advocate of Noah's proposal (it sounds like a hack!), but I don't at all agree that it would make little difference. You should read Peter Howitt's, "Interest Rate Control and Nonconvergence to Rational Expectations," in which he shows that all of RBC macro is critically dependent on perfect ratex. Any deviation from the ratex equilibrium, no matter how small, leads to a Wicksellian runaway cumulative process under very general assumptions. (And we know the deviations from ratex aren't small!) Presumably Noah's framework would capture such instabilities.

PS. Sorry for commenting down here. My phone browser doesn't permit commenting inline.

You are blogging from your phone? And I thought I was addicted to blogging! :)

CA,

"RE was an improvement over the crude Keynesian paradigm of naive agents being fooled systematically by policy makers."

I'll agree that it was the first step on the road to something better. However to decide whether RE itself was an improvement over AE, you need to know whether AE underestimates the processing power of the market by more than RE overestimates it. Because the early evidence of Bayesian learning models is that posterior distributions are extremely broad (i.e. it is questionable whether moments exist and the central limit theorem even applies), it seems likely that huge expectational shifts (animal spirits) are possible (and rational). Ultimately, I wonder if, at the end of an incredibly complex theoretical journey, we will end up back somewhere in the vicinity of the General Theory. That doesn't mean *at all* that I am questioning the value of the journey. We will never know what we know if we don't know why we know it. But it does mean that I'm very skeptical of any claims derived from the religion of ratex.

"I sure hope, however, that it is not the final word!"

To me, RE is mostly just a silly toy. Outside of actually complete markets (possibly some derivatives markets), I don't believe it makes any useful predictions.

"You are blogging from your phone?"

[Does anyone know how to "reply" in blogspot from an iPhone?]

I wonder if the rate of change also tells a story, or if it is just noise? The most rapid drops in theory papers are in the 1983-1993 period and in the 2003-2011 period. The 1983-1993 period nicely fits with your behavioral economics hypothesis, but then the rate of change plateaus. Maybe the economic crisis contributed to the second leg down between 2003 and 2011, serving as another indicator of the gap between theory and reality? If that's the case, most of the change would have been in the 2008-2011 period.

I'm not an expert on the history of economic thought, but my impression is that the Great Depression also contributed to a Kuhnian paradigm shift.

I really doubt that there were many (if any) papers talking about Borel spaces in the general journals (AER, JPE, QJE, etc) back then. If you're talking about JET or JME (is this Borel stuff about one of Aumann's papers?) then yeah sure, but there's a reason why those journals have the words "Theory" and "Mathematical" in them (throw in Econometrica in there too).

But the general observation is correct - much much less theory today. Still, I think Economics is actually the worse for it because the pendulum has swung way too far in the other direction. You can't have empirics without theory. Empirical work follows theory. Facts never speak for themselves (as Dr. McCoy pointed out). Theory both constrains empirical work and is the thing that actually allows you to make sense of the data. Without it you just get some data-mined regression with ad hoc specification buttressed by a half-baked just-so story (incorrectly referred to as the "theory") that would make a sociologist blush, which at best documents an already intuitive correlation in the data, or in other words, "Applied Micro" (not gonna name specific names either).

I do like lots of stuff in Experimental Economics, but let's not oversell it. There are obvious limitations to what it can do, both ethical and practical. And I'm not just talking about the obvious "you can't halve everyone's income to see what happens" kind of limitations or external validity. Some theories just can't be tested in the lab. For example, how would you run an experiment to test Becker's hypothesis that fertility first falls then increases with income? With rats? Or that homicide rates fall with income, while controlling for lead intake? For a lot of questions of interest, all you're gonna have is real world data, and as I said, to make sense of that, you need good theory.

Lastly, I should clarify that there's big-T theory (theory proper) and little-t theory (models). There's a place for both (big-T theory clarifies the assumptions, establishes connections and generalizes various little-t theory models), though personally I'm a fan of what Solow called the MIT tradition of "small, crisp, models" that focus in on a question and come up with a precise, formal description of the phenomenon.

that's why it's hard to apply the same rigor of a 'hard science' to a social science. like applying physics to astrology. the result is often crap (R&R debt paper or the 2001 Steven Levitt abortion crime paper)

CA,

"Despite this unrealistic assumption, in many instances the standard supply/demand framework yields useful predictions. In others it does not."

There is a difference between your examples and ratex. In your examples one could at least *imagine* a mechanism by which markets would find a way to clear. That is the essence of the Lucas critique: don't assume that markets will permit an arbitrage (even a statistical arbitrage) to persist. That is a *great* point and it's a valid criticism of a lot of macro theory (e.g. the Calvo price mechanism hack).

But, there is no mechanism whereby economic agents can just know the parameters of the model in which they live as specified by the modeller, and there is no *conceivable* way for markets to find the ratex equilibrium, even in the perfect agent limit. That's a fundamentally different category of error. Even then, it might be excusable if the consequences were small, but unfortunately they are positively huge, in some cases breaking the assumptions of the central limit theorem.

The problem is that people who dispute ratex, and the prescriptions of ratex models, are typically accused of denying rationality (favouring paternalism), as if ratex was a logical consequence of the Lucas critique. It isn't.

"In your examples one could at least *imagine* a mechanism by which markets would find a way to clear."

Yes, rational expectations is precisely such a mechanism, particularly when information is asymmetric. Get rid of it, for example by assuming bounded rationality, and the competitive equilibrium is no longer guaranteed. Now, that's fine by me, as long as one realizes that the two go together.

So we always needed more empirics but it was too costly.

Now the costs of gathering and analyzing voluminous data have fallen and we are getting to where we need to be in terms of the ratio between theory and empirical work.

What are the odds we overshoot in the empirical direction?

I.e. young economists see the empirics boom, concentrate on learning the econometrics and the Python scripting and we end up with a generation of economists who can't do good theory.

And they just do experiments all day and produce false positives.

Anyway, Noah could you please get normative and tell us if you think this is a good thing?

What's the right theory/empirics ratio?

What this guy said.

"2. Currently, experiments are rising as a share of published papers. The cost of experiments is mostly subject fees, not the cost of computing."

Regardless, paying subject fees is likely more desirable if the overall cost of experiments is falling, no? This could hold for researchers and for the pursers of giant bags o' grant funds.

'social science' is an oxymoron

You, sir, are... aw, it's just too easy.

1. There's been a lot of theory in econometrics.

2. There are several areas of economics that are screaming for theory, but no one has taken them up (successfully). In my view, international trade needs an entirely new framework that integrates with international finance. Lots of work to be done there.

3. I would generalize Noah's point about behavioral econ. A lot of economic theory has just stumbled in the face of evidence. This wasn't so much of a problem back in the day, because there was a lot less evidence. Now it's unavoidable. People are doing just-so tweaks to fit to particular empirical results, but this is not theory in Noah's sense, and rightly so. We may need some methodological earthquakes to realign the plates and get the theory thing cranking again.

A lot of economic theory has just stumbled in the face of evidence. This wasn't so much of a problem back in the day, because there was a lot less evidence. Now it's unavoidable.

I agree completely. What's interesting is that new theories aren't really emerging that make sense of any but a small part of the new data. Theory is just getting clobbered. Almost any theory you make these days in econ is bound to be decisively refuted once someone checks it with data.

We may need some methodological earthquakes to realign the plates and get the theory thing cranking again.

Well yeah, we do need that. But that doesn't mean we'll get it! Psychology has been cranking out the empirical results for decades, and still doesn't have that many working theories to show for it...

"Psychology has been cranking out the empirical results for decades, and still doesn't have that many working theories to show for it..."

It actually kind of does. It has lots. They're all just very *limited in application*.

This is a bit like physics back when optics and mechanics were two totally unrelated subjects -- and the optics theories weren't even consistent with the mechanics theories. Or perhaps it's like an even earlier, even more "small model" stage of physics.

It's a good developmental stage to be at. After 100 years, there will be enough "small models" of local application to start actually constructing some Big Theories.

Nice article, I like the table with the publication statistics.

But you miss a very crucial point.

Between the 40s and the 70s most of the theoretical work had the purpose of thoroughly checking the properties of micro and macro models, and of making clear the math and intuition behind them.

There have been long discussions about the stability and multiplicity of equilibria, properties of demand schedules etc etc. Quite boring I have to say, but necessary. Do you think the Mas-Colell book comes from nowhere? 1000 pages collecting results of hundreds of publications.

A massive amount of fine tuning that allowed the theory to be ready and be used today for empirical work.

This is a good point. We had a lot of "stored up" theoretical results that are now being tested.

Well..."now" might be an overstatement. We've had quite a while to test all those old theoretical results, and few have withstood the test.

That's correct and it probably explains the rise of "Theory+simulations" papers, which try to be right at the first shot.

I would also say that "Econometrics" has built up most of its methodological foundations in the last 20 years (panel data, cointegration, etc.). That is a lot of theoretical work that should be counted as such, but is probably regarded as empirical. Those publications play the same role as the older ones I was talking about because they mostly focus on methodologies.

I don't fit any of Noah's id choices!

There's one other aspect of this that I think is extremely important: the advances in causal analysis of observational data. Recently, the proliferation of techniques and available software for examining causal relationships in large-scale datasets rigorously has been both surprising and gratifying. It makes the entire business of the social sciences nearer and nearer (largely because the physical and life sciences are doing the same thing) to the usual ideal elsewhere: "Why think? Do the experiment!" Combine that with massive datasets that are available to test assertions and you get a real sea change in how people do business.

Maybe all economists should use this summer holiday to reread the Foundation trilogy by Asimov. One of the conditions for the validity of predictions made by using psychohistory was that the population concerned did not have any knowledge about psychohistory. It was therefore carefully omitted from the Encyclopedia Galactica.

I think Asimov got this part of the problem right. So please - stop publishing your economic theories. Publishing makes them useless.

Maybe you - and non-economists - should use this summer holiday to read some Economic papers.

That was a very good read, Noah, thanks! One thing that I would add is that nowadays a lot of empirical work is much more theory-based than it used to be. (This is also a point made by Ken Judd!) Even in experimental economics, I occasionally see people fitting pretty complicated structural models to experimental data. The same thing can be seen in many other fields, such as industrial organization, macro, etc.

True! That's formally similar to "phenomenology" in physics.

CA,

"rational expectations is precisely such a mechanism, particularly when information is asymmetric. Get rid of it, for example by assuming bounded rationality, and the competitive equilibrium is no longer guaranteed."

Except, that's my point. RE is not a mechanism. Rational choice is a mechanism. RE means rational choice *plus* knowing the structural parameters of the model economy. Lucas never explained how the agents knew the structural parameters, and subsequent research has revealed that there is no way they could find them out using econometrics, even given unlimited powers of observation. So RE is fundamentally flawed, exactly because it is *not* a mechanism. Without knowing the structural parameters, equilibrium depends on the Bayesian priors (subjective expectations) of the agents. So you don't need to assume rationality is bounded to get non-unique equilibria. You just need to assume the agents don't talk to God.

K,

yes, it is a mechanism! Suppose that on August 31st you try to explain why the market price and quantity of bicycles were higher in August than in July. You draw a demand curve and a supply curve, mark the point of intersection, and try to figure out which of the determinants of demand changed. Is doing so legitimate?

What you have actually done is to aggregate a sequence of thirty-one temporary equilibria, which may vary if daily market conditions are random. If people have full information regarding the state of the market when they conduct their daily trades, then your method is legitimate. If, however, people don't have full information, then it is not at all clear that the average monthly price and the traded quantity will correspond to the point of intersection of the monthly (or yearly, or longer-run) demand and supply curves. It all depends how people figure out which state the market is in when they trade. Assuming that people do so in a RE manner gets you there, since any daily errors are random and therefore cancel out. Get rid of RE and the price need not converge to its equilibrium, so supply and demand analysis becomes largely irrelevant. You want to get rid of RE, be my guest, just understand that the two go together.

Also, I am not sure what "subsequent research" you have in mind, but there are many papers that show that actual forecasts converge to RE forecasts under a variety of learning rules. Here are some examples:

http://www.sciencedirect.com/science/article/pii/0304406887900152

http://www.nber.org/papers/w12441

The price does not converge to the "supply-demand equilibrium", ever. This is empirically proven. So, yeah, rational expectations belongs in the trash heap.

Supply and demand analysis is still useful. What isn't useful is *equilibrium analysis*. Supply and demand analysis is best used in the form of analyzing the directional effect of shocks, for which it is highly effective.

From Barry Ritholtz's blog, The Big Picture:

Issues of Economists & Economics

1. Economics is a discipline, not a Science. Physics can send a satellite to orbit Jupiter, Economics cannot tell you what happened yesterday. This is an enormous distinction, and has led to a) the “Physics Envy,” and b) an unnecessary emphasis on mathematical complexity.

2. Models are of limited utility. People forget that (as George Box has noted) models are imperfect depictions of reality. If you become overly reliant on them, you encounter a minefield of problems. Several analysts have told me that if the Fed cannot model something, then to them, it does not exist. Think about the absurdity of that viewpoint — and its impact on policy.

3. Contextualizing data often leads to error. This is more complex than it appears. What I mean by this is that everything that economists consider has to be forced into their intellectual framework; since everything is viewed through the imperfect lens of Economic Theory, the output is similarly imperfect — sometimes fatally.

4. Narrative drives most of economics. This is the corollary to the context issue. Everything seems to be part of a story, and how that story is told often leads to critical error. Think about phrases like “stall speed”, “second half rebound”, “muddle through”, “Minsky moment”, “austerity”, etc. All of these lead to rich tales often filled with emotional resonance.

5. Economists are loath to admit ‘They Don’t Know.’ This trait is common to many professions, but I suspect the modeling issue may be partly to blame. Whenever I see a forecast written out to 2 decimal places, I cannot help but wonder if there is a misunderstanding of the limitations of the data, and an illusion of precision. To paraphrase, “Only the people who understand both the data and its limitations will not get lost in the illusion of precision.”

6. A tendency to confuse correlation with causation. This is one of the oldest statistical foibles known to mankind, and yet economics remains rife with it at the highest levels. Look no further than the Fed’s obsession with the Wealth Effect for a classic correlation error; I shudder when I think about what other arenas they are fundamentally lost in.

7. The Peril of Predictions. I cannot figure out why economists seem to be so wed to making predictions, given how utterly miserable they are at it. Items 1 and 5 might be a factor.

8. Sturgeon’s Law: Lastly, there is a wide dispersion of talent in Economics, and following Sturgeon’s Law, many of the rank & file are simply mediocre.

http://www.ritholtz.com/blog/2013/08/blame-the-economists/

Who? Who cares?

You did!

Well, maybe rational theories were not that successful at explaining data in the past, which were the times when people were driven by various behavioral biases but did not realize how exactly they were biased.

Now, as behavioral economics becomes widely taught, people are expected to have a good understanding of how exactly they are "not perfect" and how to "unbias" themselves. As more and more people do so, rational theories may well become quite relevant.

Another possible factor: the fall of the Soviet Union. That probably sounds outlandish, and I certainly think that increased computing power has more to do with it, but you might consider the fact that for over a hundred years before 1990 or so the world had a very vigorous, basically non-stop debate over how to see the world (The Age of Theory that Noah describes), and that much of this debate was related either directly or indirectly to the capitalism/communism divide. Once the USSR ended, that debate basically stopped (a remarkable fact, but I think true). The competition between the two political systems may, in retrospect, have been fueling a lot of the theoretical debate in many fields, and when that competition ended, the fuel was removed. It's not surprising, then, that economics might see a steeper drop in theory than other fields, and the timing fits the publication data in your chart...

What a bizarre reading. The main period when theory started to decline in that chart was 1983-1993, and that certainly wasn't a big time for behavioral non-theory works. I can't think of any empirical behavioral papers from then, and we can see that experimental wasn't that popular, either. When I think about the really good empirical papers from that period, I think of something like the Mariel boatlift paper, which would be a huge stretch to call behavioral.

By the way, I don't think I buy the "increased computational power" argument fully, either. It certainly applies now, but was computational power that strong or data access that great from 83-93?

Good thing you are anonymous, anonymous, since you are exhibiting appalling ignorance. Some of the most important experimental papers with strong behavioral results appeared in this period. Two are the original ultimatum game paper by Guth et al in JEBO in 1982 and the 1988 paper in Econometrica by V. Smith et al showing how deeply entrenched is the tendency for people to generate speculative bubbles, even when fundamentals are clearly known and there is no uncertainty.

Barkley, if you see above, I noted that experimental work was clearly a small share of the decrease in theory, given the chart. You can see that theory as a share drops from 57.6 to 32.4 from 1983 to 1993, but experimental work only goes up from 0.8 to 3.7. It's clearly not true that behavioral work's rising caused the "death of theory" that Noah describes.

I realize the "behavioral non-theory works" may have been interpreted differently from how I meant, but you're clearly being unnecessarily obtuse.
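
For anyone who wants to check the share comparison in the comment above, here is a quick arithmetic sketch. The four figures are the ones quoted from the chart in that comment; the variable names are just illustrative. On those numbers, the rise in experimental work accounts for only a bit over a tenth of the fall in theory's share.

```python
# Shares of published papers (percent), as quoted in the comment above.
theory_1983, theory_1993 = 57.6, 32.4
experimental_1983, experimental_1993 = 0.8, 3.7

# Change in each share, in percentage points.
theory_drop = theory_1983 - theory_1993
experimental_rise = experimental_1993 - experimental_1983

# Fraction of theory's decline that experimental work could account for,
# even if every new experimental paper displaced a theory paper.
share_explained = experimental_rise / theory_drop

print(f"Theory fell by {theory_drop:.1f} points")
print(f"Experimental rose by {experimental_rise:.1f} points")
print(f"Experimental rise / theory drop = {share_explained:.1%}")
```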

On one hand, I am inclined to say, "long overdue." The disconnect between theory and how the economy really works, especially in macro, is part of what kept me from getting a PhD in the field, despite seriously considering it in the late 80s/early 90s as an undergraduate major, again as a graduate school and finally, after law school. I have great academic interest in economics, but had no interest in the deeply flawed monetary policy dominated models of professional economists at the time.

On the other hand, what passes for empirical work these days is still far too narrow. A very large share of the total empirical output is fiddling with a dozen, national level, macroeconomic statistics (GDP, unemployment, measures of money supply, national accounts kept by Fed, inflation by one of several measures) from the same U.S. government source and now and again comparable data from a few other OECD countries.

There are huge blind spots. Pre-Financial Crisis, almost nobody was studying systemic risk and few people are even today. Virtually no attention was given to studies on the impact that a housing market bubble collapse in a tiny number of markets could have on the national and global economy. Virtually nobody was working on defining the boundary between business as usual economic data and the extremes of indicators that would push the economy over the edge. There is lots of welfare economics in tax law but very little on the impact tax law has on how robust our economy is during a downturn.

Economists are also stunningly ignorant of the mechanics of the nuts and bolts transactions that go into our economy from how securities brokers behave, to how banks handle foreclosures and REOs, to how closely held businesses handle employee compensation, to how real estate developments are organized, etc. Who is out there doing the ethnographic work, documenting trends in how transactions are conducted, etc.? Business journalists and investment bankers usually understand how the economy works better than PhD economists, which is a terrible indictment of the profession. Somebody needs to do more botany grade descriptive economics, and less equation fiddling. Economists often act like appraisers who claim to be able to value real estate right down to the last 77 cents on a six million dollar parcel.

Until economists are asking the right questions and looking at the right kind of empirical data, their efforts are for naught.

Empirical data was better-researched in the *19th century* than it is today. In the 19th century, economists paid attention to actual production levels of specific individual goods; railcar loadings; factors of production for each major item in the economy; etc. I don't see a lot of that stuff. Most macroeconomists are playing with vague aggregates, and most microeconomists are looking at overly narrow situations without enough cross-comparison.

Excessive corporate secrecy is one part of this: it may be hard to collect the data. :-P

I tend to agree with Krugman. Sensible people were never crazy enough to believe that they were rational. Notably 1973 is also the year of the rational expectations revolution. Basically there wasn't a consensus that rationality should always be assumed which was disrupted starting about then. There was the belief that it is sometimes useful to think about models with rational agents and sometimes not. The rational expectations bubble was the anomaly not the current view.

I think the failures of theory in Economics and Physics are opposite. The problem with particle theory is that a decades old model fits the data perfectly (except for the detail that there is gravity). The standard model has testable implications which have been tested and confirmed. Economic theory doesn't really have testable implications (all implications come from simplifying assumptions that the theorist doesn't take seriously). Economic models (with those assumptions) can be tested and are always rejected. No one cares as everyone knew they were false. The problems are opposite. A theory in physics killed theory, because it is so successful. That sure isn't our problem.

Math is something else entirely. It isn't supposed to be about the world in which we happen to live. Math can keep going along. The problem is that there is way too much of it already even for specialists to understand each other's papers. It was killed by success. There is so much already no one can handle any more. Again not our problem. We have plenty of interesting unsolved problems. We just don't have any answers.

Also by the way, two weeks ago Andrei Shleifer told me that theory and macroeconomics are dead.

Noah, I am worried ... I remember teaching you in the first year graduate micro theory class, and at that time you still seemed very involved and active in theory. Was it my class that convinced you that theory is dead? You shouldn't generalize from my class....

Aside from this, when discussing whether theory is dead, theorists have obvious conflicts of interest. So, they better leave the discussion to others.

Tilman! No, it definitely wasn't your class that convinced me of this...and I'm not totally convinced, it's just a hunch. Blame Miles, anyway.

But note that theory can "die off" because of failure, but also because of success (as in physics). From what you've told me, game theory is getting into harder and more esoteric stuff, where it's harder to make progress, but that may just be because they've solved most of the simple stuff.

Also, as for my own interests, I'm sure that they're more theory-oriented than the average economist. I still want to do theory! I am just realizing that I might be swimming upstream by doing it...

Three comments that I have not seen yet.

1) Even though I am Editor-in-Chief of the new Review of Behavioral Economics (ROBE), I am willing to say that theory can still play a role in behavioral econ. Thus, Aumann and others have been integrating Simonian bounded rationality into game theory. One can argue about how this is being done (and I suspect that Herb might not have approved of it), but at least some forms of behavioral econ are not completely in conflict with theory, at least a broader perspective on theory.

2) It is possible that the slowdown in theory reflects a broader process in science that Tyler Cowen has argued may be partly responsible for the Great Stagnation, and which John Horgan, former editor of Scientific American, went on about in his 90s book, The End of Science. His argument was that the most fundamental things in science have been discovered, leaving less exciting and productive things for current scientists. In physics it was general relativity and quantum mechanics (with string theory vs such rivals as causal structures triangulation not empirically testable for now), and in biology it was evolution and the discovery of DNA.

So, in econ it was the discovery by von Neumann, extended by Nash, of the usefulness of fixed point theorems for proving general equilibrium existence, not to mention game theoretic equilibria. There may simply not be any further theoretical results left to discover of this order of magnitude or importance, despite the abject failure and irrelevance of DSGE models.

3) Of course, one could go to the foundations of GE theory and worry about constructivist math and its implications for fixed point theorems, but this leads to a computable if not computational approach. Unanswered in this discussion is where simulations and ABMs fit in to this ongoing picture, although we know that in math such methods are increasingly being used to prove theorems.

Barkley Rosser

There are vast realms of opportunity for fundamental work in econ, but it's going to be of a radically different sort. We have to actually start understanding mass psychology before we can get decent fundamental discoveries in econ.

I tend to think that math is of relatively little value in economics (as contrasted to physics, for example), and I say this as someone trained in mathematics by one of your students.

Nathanael,

I take your comment seriously. What is going on here is a transdisciplinarity. Certainly psych is a major player, but a lot more is going on here. For example, defenders of neoclassical orthodoxy simply say "add social preferences" or whatever to utility functions, and, hey, back to normal, although once one does that one moves from impersonal GE to game theory, at a minimum.

CA,

"It all depends how people figure out which state the market is in when they trade. Assuming that people do so in a RE manner gets you there, since any daily errors are random and therefore cancel out."

I'm assuming no measurement error and no market frictions. Take a path of a drifted Brownian motion. Make as many observations of that path over a certain period of time as you want. Start with some prior distribution for the volatility and drift of the process. In the limit of infinite number of observations, the posterior for the volatility converges to a point (great!) but the posterior for the drift does not. If you need to form expectations about the future about that (very trivial!) process, your subjective measure will necessarily be less informative than the RE measure. There is no way for you to know the RE measure, in principle.

Under very simple models of aggregate income, the lack of convergence to RE is fairly catastrophic, and easily accounts, for example, for the equity risk premium. (No need for fragile Peso problem fittings).

The best references I know of are:

Geweke, "A note on some limitations of CRRA utility,"

Jobert, Platania, Rogers "A Bayesian solution to the equity premium puzzle"

Weitzman, "Subjective Expectations and Asset-Return Puzzles"

These papers don't even consider the feedback you would get to the income process from the capital market uncertainty in general equilibrium. Surely things get even worse.
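
A rough simulation sketch of the drifted Brownian motion example in the comment above, for readers who want to see it numerically. It samples one path over a fixed window at finer and finer grids and compares the simple drift and volatility estimates. The parameter values (`mu`, `sigma`, `horizon`) and function names are illustrative assumptions, not anything taken from the cited papers. The volatility estimate should tighten as the grid gets finer, while the drift estimate keeps a standard error of `sigma / sqrt(horizon)` no matter how many points are sampled.

```python
import math
import random


def simulate_increments(mu, sigma, horizon, n, rng):
    """Draw n increments of a drifted Brownian motion over [0, horizon]."""
    dt = horizon / n
    return [rng.gauss(mu * dt, sigma * math.sqrt(dt)) for _ in range(n)]


def estimate(increments, horizon):
    """Return (drift_hat, vol_hat) from equally spaced increments."""
    n = len(increments)
    dt = horizon / n
    total = sum(increments)
    drift_hat = total / horizon  # identical to (X_T - X_0) / T
    mean_inc = total / n
    # Sample variance of the increments, rescaled to a per-unit-time variance.
    var_hat = sum((x - mean_inc) ** 2 for x in increments) / ((n - 1) * dt)
    return drift_hat, math.sqrt(var_hat)


def drift_standard_error(sigma, horizon):
    """Standard error of the drift estimate; note that n does not appear."""
    return sigma / math.sqrt(horizon)


# Illustrative (assumed) parameters: 5% drift, 20% volatility, 25-year window.
mu, sigma, horizon = 0.05, 0.20, 25.0
rng = random.Random(0)
for n in (10, 1_000, 100_000):
    drift_hat, vol_hat = estimate(simulate_increments(mu, sigma, horizon, n, rng), horizon)
    print(f"n={n:>6}: drift_hat={drift_hat:+.4f}  vol_hat={vol_hat:.4f}")
print(f"drift standard error for any n: {drift_standard_error(sigma, horizon):.4f}")
```

Each value of `n` here draws a fresh path, so the drift estimates differ across rows through sampling noise alone; the point is only that their spread does not shrink with `n`.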

K: "In the limit of infinite number of observations, the posterior for the volatility converges to a point (great!) but the posterior for the drift does not."

If I may ask, are you sure about this? Let's say I sample the path at intervals of fixed length, then increments will be just iid samples from normal distribution, and my posterior will converge to true values (of both mean and variance) as sample size goes to infinity.

If you instead mean sampling more and more points within a fixed interval (i.e. at higher frequency), then maybe you're right, but I'm not sure why such sampling scheme should be more relevant.

The papers you cite are about impact of learning after finite period, when posteriors have not yet converged (so predictive density is fat-tailed due to parameter uncertainty). Since the economy has indeed existed for only finite time, this may be of course important if it leads to quantitatively significant deviation from RE results, but it's not the same thing as proof that there is "no way for you to know the RE measure".

And the 600-pound man decreed that running is dead.

Fun fact:

Assume you know the volatility but not the drift of a Brownian motion, and you observe a path over some period. Assume also you have some prior for the drift rate. The posterior drift rate is a function only of the prior and the difference between the beginning and end point of the path. Observing more points in the interior of the path adds no information.
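
A minimal sketch of the "fun fact" above, assuming a normal prior on the drift (the comment only says "some prior", so the conjugate normal case is an assumption made here for tractability). One function updates the prior using only the two endpoints; the other updates it increment by increment along the whole observed path. Function and argument names are illustrative. The two posteriors should coincide, because the interior points cancel out of the sums.

```python
import math


def posterior_from_endpoints(prior_mean, prior_sd, sigma, x_start, x_end, horizon):
    """Normal posterior for the drift using only the start and end of the path."""
    precision = 1.0 / prior_sd**2 + horizon / sigma**2
    mean = (prior_mean / prior_sd**2 + (x_end - x_start) / sigma**2) / precision
    return mean, math.sqrt(1.0 / precision)


def posterior_from_path(prior_mean, prior_sd, sigma, times, path):
    """Normal posterior for the drift using every observed increment in turn."""
    precision = 1.0 / prior_sd**2
    weighted = prior_mean / prior_sd**2
    for i in range(1, len(path)):
        dt = times[i] - times[i - 1]
        dx = path[i] - path[i - 1]
        # An increment dx ~ N(mu * dt, sigma^2 * dt) adds dt / sigma^2 of
        # precision about mu and dx / sigma^2 to the precision-weighted mean.
        precision += dt / sigma**2
        weighted += dx / sigma**2
    return weighted / precision, math.sqrt(1.0 / precision)


# A made-up path observed at four times; the interior values are arbitrary.
times = [0.0, 0.3, 0.7, 1.0]
path = [1.00, 1.40, 0.90, 1.25]

print(posterior_from_endpoints(0.0, 0.5, 0.2, path[0], path[-1], times[-1] - times[0]))
print(posterior_from_path(0.0, 0.5, 0.2, times, path))
```

The increments `dx` telescope to the end-to-end difference and the `dt` terms sum to the window length, which is why extra interior observations leave the posterior unchanged when the volatility is known.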

Stephen: "And the 600-pound man decreed that running is dead."

Fight! Fight! Fight! Fight!

ivansml,

"Are you sure about this?"

Yes. I said "Make as many observations of that path over a *certain period* of time as you want." Not, "sample the process over an arbitrarily long period."

So I think we agree.

"I'm not sure why such sampling scheme should be more relevant."

I think you answer your own question: "Since the economy has indeed existed for only finite time, this may be of course important if it leads to quantitatively significant deviation from RE results"

The deviations are, in fact, huge (not merely significant).

"but it's not the same thing as proof that there is "no way for you to know the RE measure""

Sure it is. First, you can't know it exactly, and for some theories that's fatal. RBC macro, for example, is not stable away from the RE equilibrium. (Howitt 92). Second, it's not reasonable to assume that past drift rates are stable over sufficiently long periods to estimate them reliably. I.e. it takes many decades to estimate drift rates of macro variables (drift of consumption *and* drift of consumption volatility are critical), and there is no reason to assume that those drift rates will persist over the next many decades over which the asset pricing measure is relevant. For sensible models the fragility of the estimators is a dominant consideration.

Certainly, though, there are problems for which RE may be relevant. Early exercise of options, for example, since markets are relatively more complete and empirical drift rates don't matter under the risk neutral measure. Even there, though, there can be quite wide divergences in exercise behaviour, so it's questionable whether RE is a good approximation even under the most favourable circumstances.

K: Sampling given interval at increasing frequency is definitely not what people have in mind when they discuss convergence to rational expectations. Anyway, my point is that there's a difference between saying "agents cannot learn REE at all" and "agents will learn REE asymptotically, but given typical timescales asymptotic behavior is misleading".

Howitt shows that the learning process is unstable in a model where the central bank pegs the interest rate at a constant value. Since most DSGE models these days assume that the CB instead follows some kind of Taylor rule (with coefficients chosen to yield determinacy), this is not a very applicable result.

On the other hand, literature on adaptive learning (Evans & Honkapohja,...) shows that RE equilibria in NK and RBC models are typically learnable (though agents in those models usually ignore parameter uncertainty, which is a bit strange). And if drifts change over time, you can construct a model where agents learn and update their beliefs about hidden state by solving a filtering problem (e.g. Veronesi 1999 in asset pricing, though surely there must be some papers in macro too). I'm not really an expert on this, but suffice it to say, I think it's a bit more complicated than you say.

ivansml,

Evans and Honkapohja is exactly the kind of deviation from RE that CA was assuming above. I.e. errors and frictions. I agree that this is not the Achilles heel of RE. The Achilles heel is that "agents in those models usually ignore parameter uncertainty" as you say, which leads to the fundamental, inescapable and large deviations from RE.

Regarding Howitt '92, the implications for RBC are slightly more subtle than I implied. Howitt shows that, under very general conditions, if r is below r* that results in rising i, and vice versa. Once you permit that the real rate may differ from the RE equilibrium (the "natural rate"), and that any difference of r relative to r* results in a rising, not falling, difference, then you have a model in which the central bank can directly control the real rate via changes in the nominal rate, and therefore the path of the nominal rate has a direct impact on output, in direct contradiction of standard RBC. It also requires you to have a theory of the dynamics of prices and output *away* from the RE equilibrium, though it may be that you can do it without sticky prices (I'll admit). Anyways, it's a long and complex debate, and not really central to my point, which I'll let rest on the asset pricing literature.

Just my two-bit opinion, but maybe there's more money to be made in empirical work than theory? I don't see too many grants given to scholars who want to work out a deductive theory. Firms, think tanks, government, etc. probably prefer to have stats to back up whatever issue they support rather than complex theory which is difficult, if not impossible, to empirically prove. But you're going to get more support with a statistic than a theory.

How many half-assed statistical studies are out there, all in an effort to further an agenda? Lies, damn lies and statistics - but hey, it works.

But again, just a theory.

Let me elaborate on the RBC stuff (can't help myself)...

In an RBC world, the real rate is always at the RE equilibrium. That leaves two possible consequences for the nominal rate and the inflation rate. Either the inflation rate is endogenous and therefore the CB can't set the nominal rate. It has to be at r+pi. Or, the CB sets the nominal rate and therefore inflation just has to follow at pi = i-r. The second possibility seems to me to be totally destroyed by Howitt '92: when the CB raises the nominal rate inflation does *not* go up (Kocherlakota doesn't even believe it any more.) The first possibility, that the CB *can't* set the nominal rate, is just sort of ridiculous. Does anybody think that the Fed can't set IOR at 10%, and thus floor the FF rate at 10%? It's just silly, no? (I only raise the possibility because it's actually sometimes claimed, or implied, by RBC types, that the CB can't set rates.)

The way I see it, the only thing that can rescue most of RBC is the assumption that we are everywhere and always at the RE equilibrium. And intrinsic structural parameter uncertainty just kills that possibility.

http://www.edge.org/3rd_culture/anderson08/anderson08_index.html

I was waiting for someone else to point this out, but no one has. So even though the comments on this blog post are getting stale, let me point it out:

Behavioral Econ was WAY theoretical. In fact, in many ways, Behavioral Econ was the last hurrah of theoretical economics. Have you ever had the pleasure of being made to prove the existence of Von Neumann expected utility function from the Savage axioms? Well, prospect theory is like that except worse, with even more esoteric math (that's where measure theory and those Borel sets come in - I see Barkley did mention Aumann above). You want things more general, and funky, and you want it precise and formal... well, the math/theory is actually gonna get strange.

So if you're gonna go looking for Colonel Mustard and the candlestick, it wasn't Kahneman and it wasn't "irrationality". (It was Levitt and instrumental variables (joke))

The quantitative and the qualitative are merging, and the complexity of things like the soul conjecture so befuddles most laymen that they fail to notice it happening. Qualitative data has never been as available in size and scope as it is today, and we've never had computers and software so able to query, poke and prod it as we have today.

The quantitative and the qualitative are merging such that art is becoming science and science is being realized as art.